Thoughts on the Raspberry Pi 4 + 10k TCP connections with Vert.x

Background Context

I recently picked up a Raspberry Pi 4 [4GB edition]. I had been debating picking up a Linux system for some dev work. I don’t like using Docker right from the get-go as a dev environment. My general workflow for any project, say a Kotlin project, goes through the following steps:

- Run from IDE

- Run from terminal via build tool such as maven or bazel

- Compile to a fat jar and run using java -jar <project>.jar

- Run fat jar on a Linux server [now possible]

- Run as a docker container

- Run as a Kubernetes deployment

I know many devs have jumped directly to step 5 but I think it is still important to make sure that steps 1-4 are compiling and running properly. Step 1 is still important because other devs, who do not have context, will navigate to your project [in their IDE] to add new features or fix bugs. Very likely, they will add a bunch of print statements in the project and start making requests to see what the internals look like. I guess you could use the documentation on Confluence but sometimes it is easier and faster to just log-dump the request. They should be able to spin the service up easily when they have no context. The project should not fail to start up because a required environment variable was not set - set a sane default, log the behaviour [such as persistence disabled] and proceed.
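To make the "sane default, log, proceed" idea concrete, here is a minimal sketch in Kotlin. The variable names APP_PORT and REDIS_URL, the Settings class and the default port 8080 are all hypothetical, picked only for illustration; the idea is simply that a missing variable downgrades behaviour and leaves a log line instead of crashing the service.

```kotlin
// Hypothetical settings holder; the names are illustrative, not from a real project.
data class Settings(val port: Int, val redisUrl: String?)

// Reads configuration from the environment, falling back to defaults
// and logging every fallback instead of failing at startup.
fun loadSettings(
    env: Map<String, String> = System.getenv(),
    log: (String) -> Unit = { println(it) }
): Settings {
    // Missing or non-numeric port: fall back to 8080 and say so.
    val port = env["APP_PORT"]?.toIntOrNull() ?: 8080.also {
        log("APP_PORT not set or invalid, defaulting to $it")
    }
    // Missing Redis URL: keep running, with persistence switched off.
    val redisUrl = env["REDIS_URL"]
    if (redisUrl == null) {
        log("REDIS_URL not set, persistence disabled")
    }
    return Settings(port, redisUrl)
}
```

Calling loadSettings() with nothing exported still returns a usable Settings object, and the two log lines tell the next dev exactly which features are switched off.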

If for any reason you think this is a lot of work - I present to you Margaret Hamilton, the lead developer on the Apollo mission, standing next to the hard copy of the code base.

VPS vs Raspberry Pi

I was thinking of getting a DigitalOcean VPS - with the 1 CPU, 1GB RAM option costing about $5/month or $20/month for the 2 CPU, 4GB RAM one. The monthly cost was definitely a factor since I was planning on running multiple things 24/7 and not just one small app. The advantage was being able to easily expose it on the internet for demo reasons. For an in-house server, I would have to port forward on my router, which I was [and still am] not a big fan of. Ngrok [https://ngrok.com] could definitely be a quick workaround. Another concern was that I love doing benchmarks and load testing. I did not want to trip any DDoS protection on DO’s end and get my account suspended or anything like that.

I picked up the CanaKit Raspberry Pi 4 Starter Kit (4GB RAM) from Amazon. It was definitely a bit more expensive than I thought it was going to be, all things considered. It was relatively easy to set up the heat sinks and the case. I hooked it up to a keyboard, mouse, and my TV to install the OS. I don’t need a GUI so I installed the headless Raspberry Pi OS, which is Debian based [apt - not yum]. raspberrypi.local is set up to connect to the Pi.

pi@raspberrypi ➜ ~ uname -a

What’s running on it?

A server is useless if it is not running anything. I had a few things I knew I was going to set up. The first was a DNS-level ad blocker, Pi-hole [https://pi-hole.net]. I gave my Pi a static IP via DHCP reservation in my Rogers router and set up Pi-hole to block ads for all devices that use it as a DNS server. This one application alone made the Pi worth it. Pi-hole helps quite a bit. It doesn’t block everything [like ads on YouTube on mobile] but it is good enough. It also caches the DNS responses so browsing seems a bit zippier too. It is PHP based so it needs a web server to call out to the PHP scripts - lighttpd in this case. Not a big fan of this. Why are we still using PHP + web servers in 2020??

List of other things I set up not including things like zsh and htop:

- OpenJDK [OpenJ9 seems to have limited to no support for ARM]

- Redis

- Postgres

- Grafana

- Prometheus

- MongoDB

- InfluxDB [docker container]

- Docker registry [docker container]

- Node.js for Vue.js dev work

- Caddy

- Nginx [inactive]

- rustc and go

I also set up gitea [ https://gitea.io/en-us ], which is a self-hosted git server - but the SSH permissions to push/pull got borked :(

I installed Caddy as a reverse proxy for the Pi to route to the various services I have running behind it. Caddy is similar to nginx but with slightly simpler configuration and automatic HTTPS setup.

# Caddyfile

➜ ~ curl -k https://raspberrypi.local/

Load testing and 10k connections

Running wrk on Caddy [with logging turned on] I got the following results

$ wrk -t 32 -c 64 -d20s https://raspberrypi.local/

Next I set up a Vert.x TCP server and used tcpkali [ https://github.com/satori-com/tcpkali ] to see if the Pi could handle 10k concurrent connections.

As always, I opted to use Vert.x to create the TCP server. A TCP server in Vert.x can be spun up very easily with the following lines of code

val vertx = Vertx.vertx()

I also added some Prometheus metrics:

- Node exporter to get the system information of the pi during the load test

- Micrometer bindings to get the JVM information

- Vert.x metrics to get the net server connection count + a custom packet received counter

The entire main function can be viewed here - https://gist.github.com/asad-awadia/93ba7c1eba6d6b8a8a84e9f20721d983

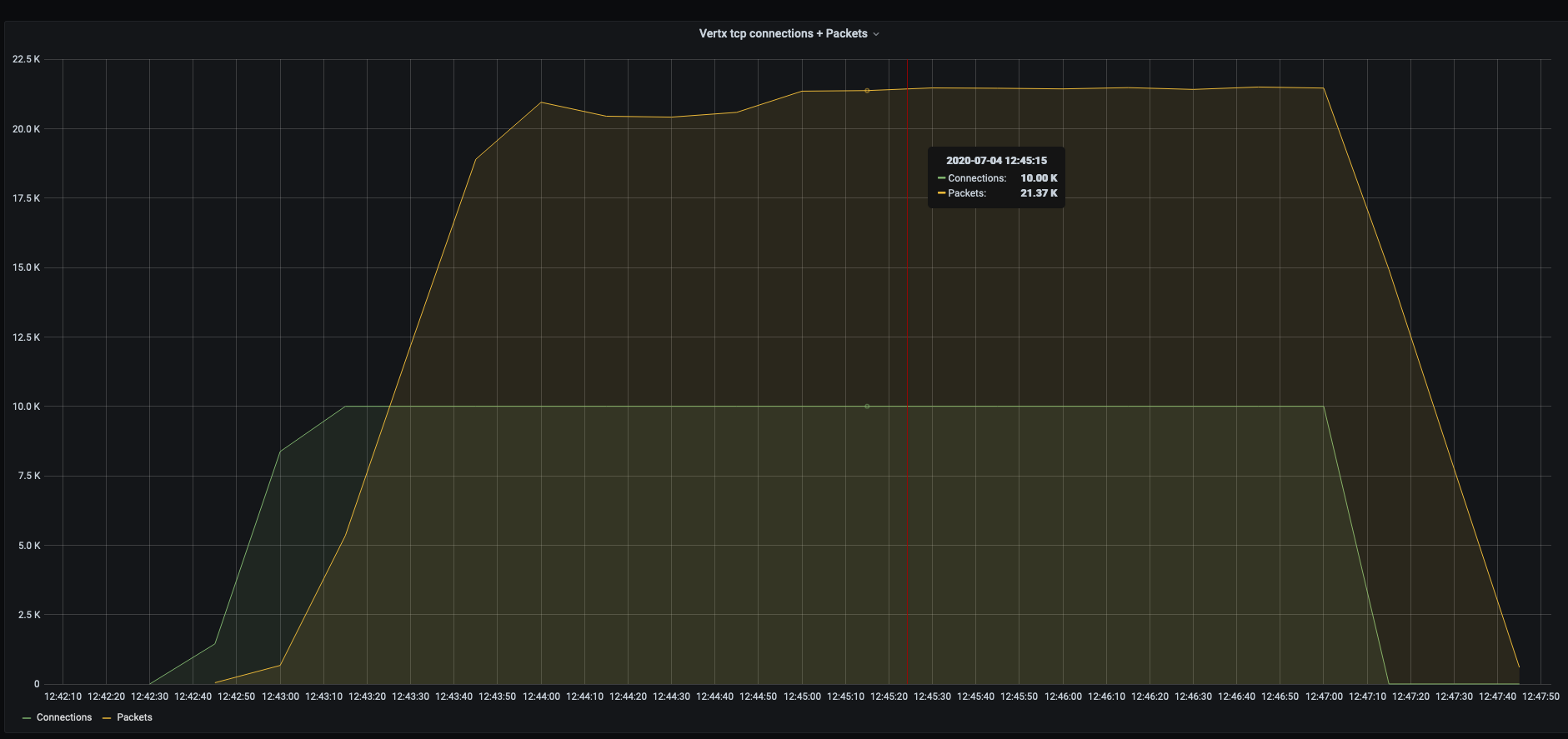

Then the test began using tcpkali:

tcpkali -m '$' -r 10 -c 10k -T 240s --connect-rate=1000 <pi-ip>:9091
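To get a feel for what those tcpkali flags ask of the Pi, here is a small back-of-the-envelope sketch in Kotlin. It assumes -r is the message rate per connection per second, that the '$' message is a single byte, and that every connection is established and sustains its rate, so the totals are upper bounds and not measured results. The LoadPlan class and its field names are made up for this illustration.

```kotlin
// Hypothetical helper describing the load implied by the tcpkali flags.
data class LoadPlan(
    val connections: Int,   // -c 10k
    val connectRate: Int,   // --connect-rate=1000 (new connections per second)
    val msgRate: Int,       // -r 10 (messages per second, per connection)
    val msgBytes: Int,      // -m '$' (one byte of payload)
    val durationSec: Int    // -T 240s
) {
    // Seconds needed to open all connections at the given connect rate.
    val rampUpSec: Double get() = connections.toDouble() / connectRate
    // Aggregate message rate once every connection is up.
    val messagesPerSec: Long get() = connections.toLong() * msgRate
    // Payload bytes per second, ignoring TCP/IP overhead.
    val payloadBytesPerSec: Long get() = messagesPerSec * msgBytes
    // Upper bound on messages sent if the full rate held for the whole run.
    val maxMessages: Long get() = messagesPerSec * durationSec
}

val plan = LoadPlan(
    connections = 10_000,
    connectRate = 1_000,
    msgRate = 10,
    msgBytes = 1,
    durationSec = 240
)
```

Under those assumptions the ramp-up takes about 10 seconds, the steady state is 100,000 messages per second carrying roughly 100 KB/s of payload, and the run tops out at 24 million messages. The payload is tiny; the interesting part of the test is the 10k open sockets and the per-message handling cost.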

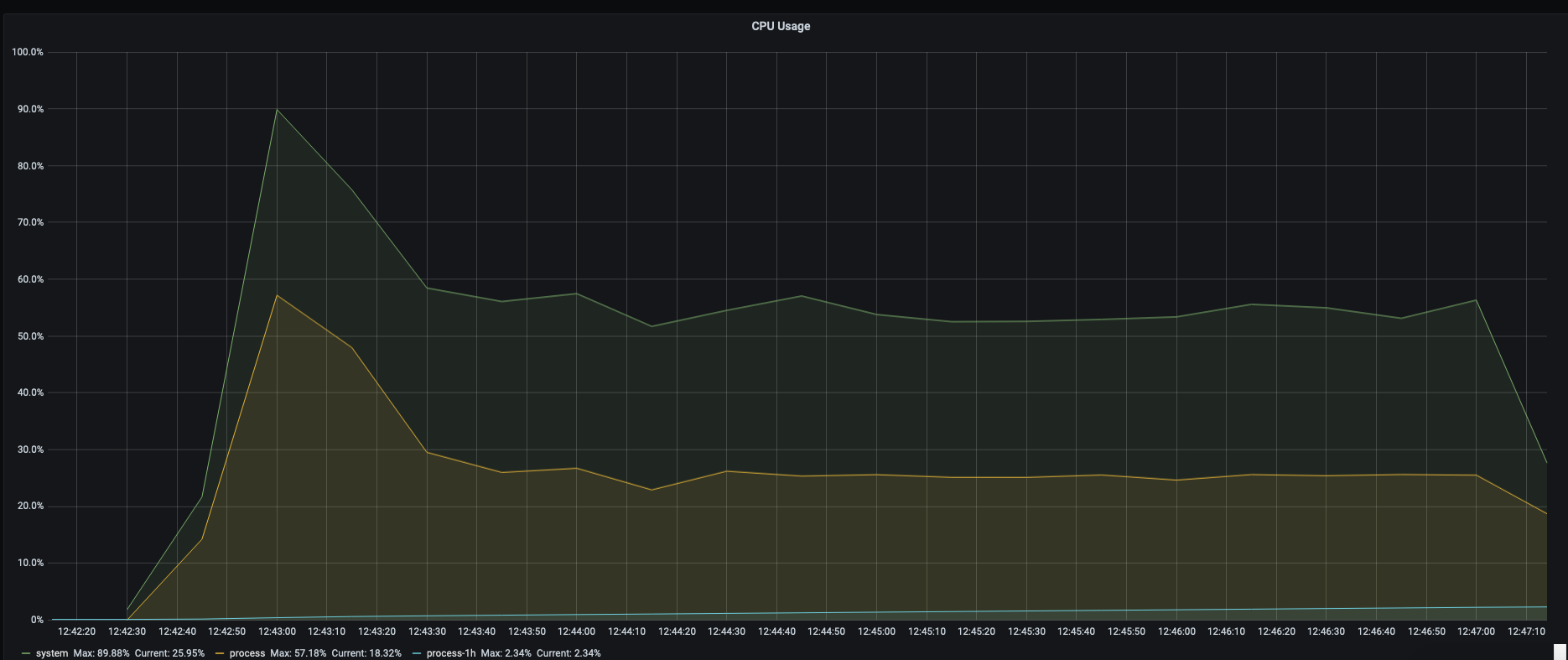

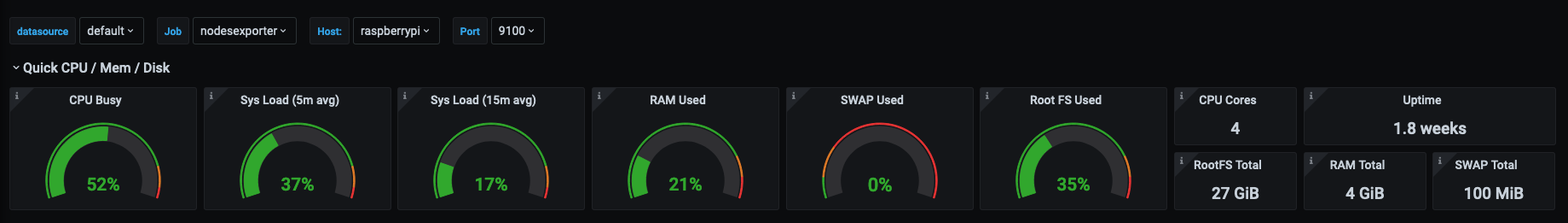

Some Grafana screenshots:

Vert.x net server connections + packet received

CPU usage

JVM memory used - Vert.x is really memory efficient

Node exporter quick stats

For a tiny little device it seems more than capable for a single dev. I would not use it as a build server for your company’s production apps, but having an always-on Linux system available is really nice.